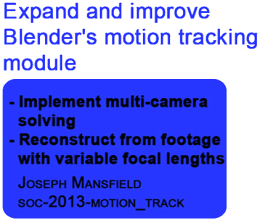


"soc-2013-motion_track" branch

Total commits: 48

Total committers: 2

First commit: June 12, 2013

Latest commit: September 23, 2013

Commits by Month

| Month | Number of Commits |
|---|---|
| September, 2013 | 26 |
| August, 2013 | 10 |
| July, 2013 | 10 |
| June, 2013 | 2 |

Committers

| Author | Number of Commits |
|---|---|
| Joseph Mansfield | 47 |
| Campbell Barton | 1 |

Popular Files

| Filename | Total Edits |
|---|---|
| libmv-capi.cc | 21 |
| keyframe_selection.cc | 12 |
| libmv-capi.h | 11 |
| bundle.cc | 10 |
| modal_solver.cc | 9 |
| tracking.c | 9 |
| libmv-capi_stub.cc | 7 |
| reconstruction.cc | 7 |
| initialize_reconstruction.cc | 7 |
| pipeline.cc | 7 |

Latest commits

September 23, 2013, 14:51 (GMT)

Code cleanup: remove a duplicate define

September 18, 2013, 22:21 (GMT)

Remove multicamera solver option

Reverting the previous commit because Sergey threatened puppies with an axe for every time I add an option.

September 18, 2013, 13:32 (GMT)

Add checkbox for multicamera solving

To enable reconstruction using tracks from multiple cameras, the "Multicamera" checkbox in the Solve panel should be checked. This is currently non-functional.

September 18, 2013, 12:58 (GMT)

Add simple panel for creating multicamera correspondences

The create correspondence operator is currently non-functional, but it is expected that it will create correspondences between tracks in different movie clips.

September 18, 2013, 09:25 (GMT)

Allow empty intrinsics vector for bundle adjustment

If an intrinsics vector is passed to the bundle adjuster with size less than the number of cameras, the vector is padded out to that size with empty intrinsics.
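The padding behaviour described in this commit message could look roughly like the sketch below. It is only an illustration under stated assumptions: the `Intrinsics` struct and the `PadIntrinsics` helper are hypothetical names, not libmv's actual types or functions, and a default-constructed value stands in for "empty intrinsics".

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a camera intrinsics object. A default-constructed
// value plays the role of "empty intrinsics" in this sketch.
struct Intrinsics {
  double focal_length = 0.0;
  double principal_x = 0.0;
  double principal_y = 0.0;
};

// Hypothetical helper: grow the vector to one entry per camera, filling the
// new slots with empty intrinsics. A vector that is already large enough is
// left untouched (it is never shrunk).
void PadIntrinsics(std::vector<Intrinsics>* intrinsics,
                   std::size_t num_cameras) {
  if (intrinsics->size() < num_cameras) {
    intrinsics->resize(num_cameras);
  }
}
```

Existing entries keep their values, so a caller that only knows the intrinsics for camera 0 can still pass a one-element vector.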

September 18, 2013, 01:33 (GMT)

Multicamera-ify bundle adjustment

Bundle adjustment now uses the appropriate camera intrinsics when calculating the reprojection error for each marker. Refinement options are currently only applied to the camera intrinsics for camera 0 (all other camera intrinsics are made constant).
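As a rough illustration of choosing intrinsics per marker, the sketch below computes a reprojection error with a distortion-free pinhole model. Everything here is an assumption made for the example: the `Intrinsics` and `Marker` structs, the `ReprojectionError` function, and the simplified camera model are hypothetical and do not reproduce the branch's bundle adjustment code.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical, distortion-free pinhole intrinsics.
struct Intrinsics {
  double focal_length;
  double principal_x;
  double principal_y;
};

// Hypothetical marker: a 2D observation tagged with its source camera.
struct Marker {
  int image;
  int track;
  double x, y;
  int camera;
};

// Projects a point given in camera coordinates (Z > 0) with the intrinsics of
// the marker's own camera and returns the distance to the observed position.
double ReprojectionError(const Marker& marker,
                         const std::vector<Intrinsics>& intrinsics,
                         double X, double Y, double Z) {
  const Intrinsics& k = intrinsics.at(marker.camera);
  const double u = k.focal_length * X / Z + k.principal_x;
  const double v = k.focal_length * Y / Z + k.principal_y;
  return std::hypot(marker.x - u, marker.y - v);
}
```

The only point of the sketch is the lookup `intrinsics.at(marker.camera)`: the same 3D point yields different predicted pixel positions depending on which camera observed it.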

September 14, 2013, 11:11 (GMT)

Merging from trunk r60137 into soc-2013-motion_track

September 14, 2013, 11:01 (GMT)

Fix crash with fetching all reconstructed views

September 14, 2013, 10:08 (GMT)

Scale all camera reconstructions to unity

September 14, 2013, 08:46 (GMT)

Multicamera-ify reconstruction initialization

Initialization can now reconstruct from two images regardless of the source camera.

September 12, 2013, 16:16 (GMT)

Modify keyframe selection algorithm to be camera-aware

The keyframe selection algorithm can now select keyframes from the camera passed to it as an argument. It's worth looking into multicamera algorithms too.

September 12, 2013, 14:45 (GMT)

Improve modal solver image iteration

Instead of iterating over all images and filtering through only those from camera 0, iterate over only camera 0 images. With the current way markers are stored, this is very computationally inefficient, but this should be improved soon.
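One way to picture the inefficiency mentioned in this message: if markers live in a single flat list, finding the images that belong to one camera means scanning every marker. The sketch below assumes such a flat list; the `Marker` struct and `ImagesForCamera` function are hypothetical and are not taken from the branch.

```cpp
#include <cassert>
#include <set>
#include <vector>

// Hypothetical marker record stored in one flat list.
struct Marker {
  int image;
  int track;
  double x, y;
  int camera;
};

// Returns the distinct image identifiers observed by `camera`, in ascending
// order. Every call walks the whole marker list, which is the kind of cost
// the commit message describes.
std::vector<int> ImagesForCamera(const std::vector<Marker>& markers,
                                 int camera) {
  std::set<int> images;
  for (const Marker& marker : markers) {
    if (marker.camera == camera) {
      images.insert(marker.image);
    }
  }
  return std::vector<int>(images.begin(), images.end());
}
```

A solver restricted to camera 0 would then loop over `ImagesForCamera(markers, 0)` rather than testing the camera of every image inside its main loop.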

September 12, 2013, 14:09 (GMT)

Use multicamera terminology for initial image selection

To initialise reconstruction, two images need to be selected with high variance. The GRIC and variance algorithm can continue to refer to the images as keyframes, as it is intended to work with images from a single camera, but the libmv API should be more general than that, referring to selected *images*. This is a non-functional change.

September 12, 2013, 00:13 (GMT)

Modify modal solver to use only camera 0

Match recent changes to how tracks are stored.

September 11, 2013, 14:48 (GMT)

Clearer association between markers and cameras

As the camera identifier associated with a marker is simply bookkeeping for multicamera reconstruction, make it an optional attribute when handling tracks and reconstructed views. This means that the libmv API is still nice for users that don't need to associate images with cameras.

September 11, 2013, 11:18 (GMT)

Merging from trunk r60041 into soc-2013-motion_track

September 11, 2013, 10:34 (GMT)

Fix: BKE tracking compilation error

Previous commit was incomplete and didn't include blender-side changes to the libmv API.

September 10, 2013, 21:07 (GMT)

Modify data structure for storing tracks

The Tracks data structure stores Markers. The current design has it so that a marker's image identifier denotes the frame from the associated camera that the marker belongs to. That is, it treats the image identifier more as a frame identifier. You could have markers in image 0 for camera 0 and image 0 for camera 1 and they wouldn't be considered to be in the same image. However, this design doesn't make much sense because the reconstruction algorithms don't care about frames or the cameras from which a frame came, only that there is a set of images observing the same scene.

A better design is for all frames from all cameras to have unique image identifiers. The camera identifiers can then denote a subset of those images. No image captured by camera 0 will have the same image identifier as images from camera 1. This way, the algorithms can continue to just treat all frames as one big set of images and the camera identifier provides the association of an image to the camera it originated from. This makes it much easier to implement multicamera reconstruction without significant changes to the way libmv behaves.
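The "better design" in this message can be sketched as follows. This is a minimal illustration rather than libmv's actual code: the `Marker` fields, the `Tracks` class, and its method names are assumptions made for the example. Image identifiers are unique across all cameras, and the camera identifier merely labels which subset an image belongs to.

```cpp
#include <cassert>
#include <vector>

// Hypothetical marker: `image` is unique across all cameras, and `camera`
// records which camera that image came from.
struct Marker {
  int image;
  int track;
  double x, y;
  int camera;
};

// Hypothetical container, loosely modelled on the description above.
class Tracks {
 public:
  void Insert(int image, int track, double x, double y, int camera) {
    markers_.push_back(Marker{image, track, x, y, camera});
  }

  // All markers in one image. No camera argument is needed because image
  // identifiers never collide between cameras.
  std::vector<Marker> MarkersInImage(int image) const {
    std::vector<Marker> result;
    for (const Marker& marker : markers_) {
      if (marker.image == image) result.push_back(marker);
    }
    return result;
  }

  // The subset of markers whose images originate from `camera`.
  std::vector<Marker> MarkersForCamera(int camera) const {
    std::vector<Marker> result;
    for (const Marker& marker : markers_) {
      if (marker.camera == camera) result.push_back(marker);
    }
    return result;
  }

 private:
  std::vector<Marker> markers_;
};
```

For instance, two frames from camera 0 might get image identifiers 0 and 1 while the first frame from camera 1 gets identifier 2, so an algorithm that only asks for "markers in image 2" never has to know about cameras at all.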

September 10, 2013, 10:57 (GMT)

Update doxygen comments for reconstruction initialization

EuclideanReconstructTwoFrames and ProjectiveReconstructTwoFrames currently assume that the passed markers belong to a single camera. That is, we're not reconstructing frames from different cameras. Ideally, this restriction should be removed.

September 9, 2013, 14:50 (GMT)

Code cleanup: Google-style constant names

Google style guide recommends kConstantName for constants.

MiikaHweb - Blender Git Statistics v1.06

MiikaHweb | 2003-2021
